Evidence Intelligence

Your evidence tells the story. Nquiry helps you hear it.

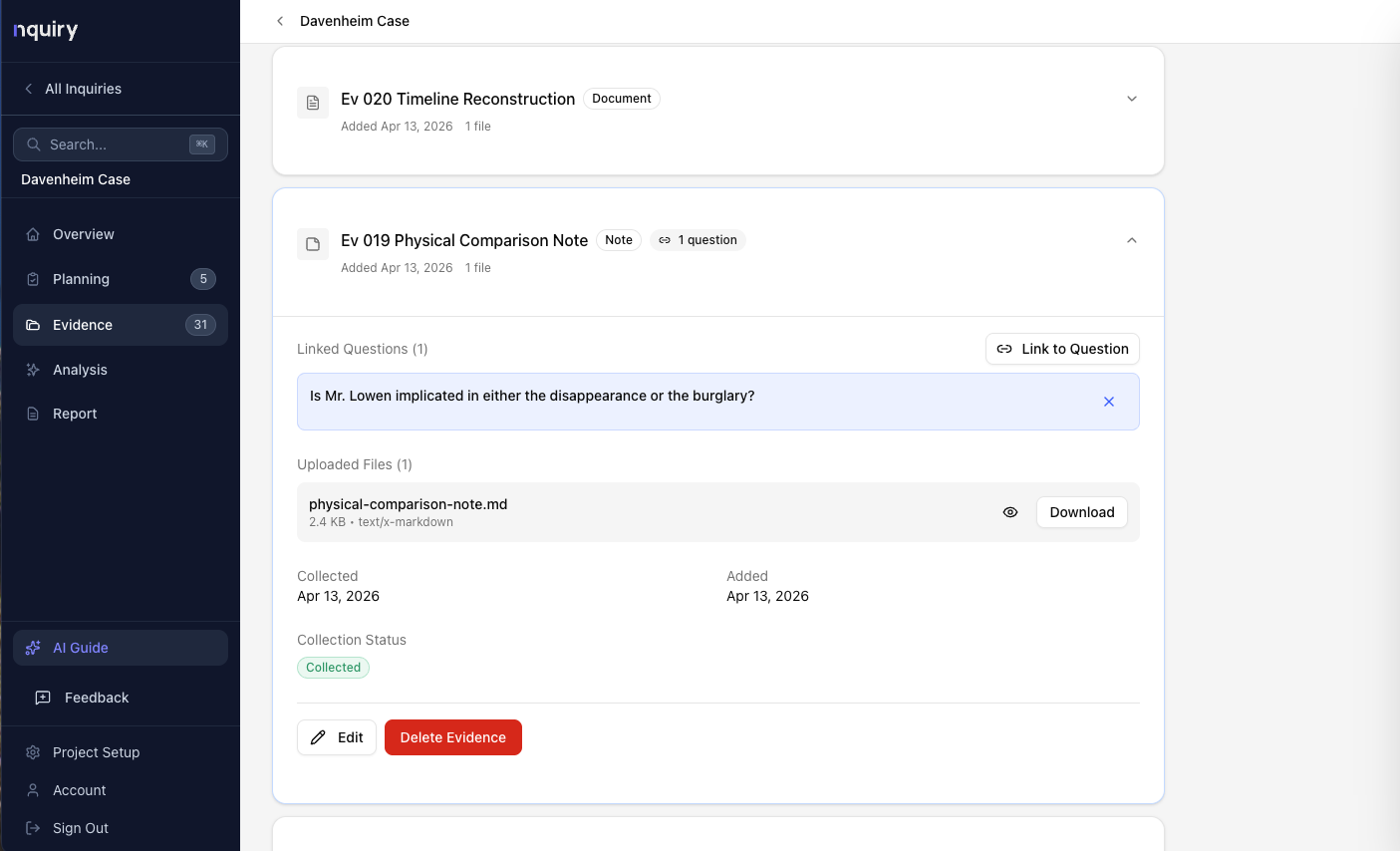

When you upload evidence to Nquiry, we don't just store files in a folder. We process every document so that its meaning — not just its words — becomes searchable.

Here's the reality of modern investigations: you collect hundreds of documents — emails, financial records, interview transcripts, policy manuals, field notes. Every piece matters. But the human brain can only hold so much, and keyword search only finds what you already know to look for.

When leadership asks “did you consider all the evidence?” — you say yes. But you're not 100% sure. You can't be. Not across 200 documents. Nquiry changes that.

What Changes

Three capabilities that keyword search can never provide.

Discovering Connections You Didn't Know Existed

With keyword search, you only find what you thought to look for. Search “overtime” and you find documents containing “overtime.”

With Nquiry, you also find the interview passage where someone said “I felt pressured to stay past my shift.” You didn't search for that phrase. But it's conceptually connected — and Nquiry surfaced it.

Measuring Your Evidence Coverage

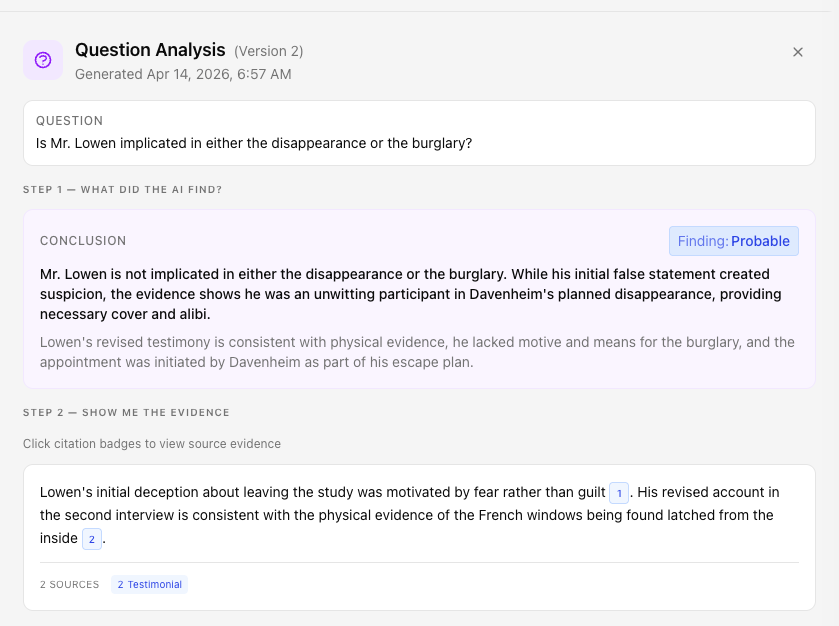

Every retrieved passage comes with a relevance score — a concrete number representing how closely it relates to your question.

For example, you can see at a glance that Question 3 drew evidence from 8 of your 12 sources, while Question 1 relied heavily on just 2. You can now measure your evidence coverage, not just assert it.
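Once every retrieved passage carries its source, counting coverage is simple bookkeeping. Below is a minimal Python sketch. The `RetrievedPassage` record, its fields, and the file names and counts are hypothetical illustrations, not Nquiry's actual data model or data.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RetrievedPassage:
    # Hypothetical record for illustration, not Nquiry's actual data model.
    question: str  # which analysis question retrieved the passage
    source: str    # which uploaded document it came from
    score: float   # relevance score


def source_coverage(passages: list[RetrievedPassage]) -> dict[str, int]:
    """Return, for each question, how many distinct sources it drew from."""
    sources_by_question: dict[str, set[str]] = defaultdict(set)
    for p in passages:
        sources_by_question[p.question].add(p.source)
    return {q: len(s) for q, s in sources_by_question.items()}


TOTAL_SOURCES = 12  # illustrative collection size
SAMPLE_PASSAGES = [
    RetrievedPassage("Q1", "interview_01.pdf", 0.82),
    RetrievedPassage("Q1", "interview_01.pdf", 0.77),  # same source counts once
    RetrievedPassage("Q1", "payroll.xlsx", 0.71),
    RetrievedPassage("Q3", "interview_02.pdf", 0.88),
    RetrievedPassage("Q3", "policy_manual.docx", 0.64),
    RetrievedPassage("Q3", "field_notes.txt", 0.59),
]

if __name__ == "__main__":
    for question, n in sorted(source_coverage(SAMPLE_PASSAGES).items()):
        print(f"{question}: {n} of {TOTAL_SOURCES} sources")
```

Several passages from the same document count as one source. That is why a question can retrieve many passages and still rest on only a few documents.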

A Defensible Audit Trail

When leadership asks “what evidence informed this finding?” — you don't rely on memory.

Instead: “Here are the 47 passages above the relevance threshold that informed this finding. They came from 8 different sources. Here's the full audit trail.”

Under the Hood (Just Enough)

What happens when you upload a document.

You upload evidence

PDFs, Word documents, spreadsheets, images, text files. Drag and drop. Nquiry extracts the text automatically.

Nquiry processes the meaning

Every passage is converted into a mathematical representation of what it means — not what words it contains, but what it’s about. No one has to manually tag or categorize anything.

You ask a question

When you run analysis, your question goes through the same process. Nquiry searches the entire evidence collection for passages that are conceptually close to your question.

The AI analyzes what it found

Relevant passages are assembled and sent to the AI along with the evidence evaluation framework. The AI evaluates each piece, weighs the evidence, and produces a finding with citations and a confidence level.

Everything is logged

Which passages were retrieved, how relevant each one was, which were included or excluded — it’s all recorded and available for your review.
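The steps above can be sketched in a few lines of Python. This is a toy, not Nquiry's implementation. It makes three assumptions: the "mathematical representation" is a vector, closeness is measured by cosine similarity, and hand-made three-dimensional vectors can stand in for the output of a real embedding model. The 0.5 threshold, the file names, and the `AuditEntry` fields are hypothetical, and the AI analysis step is left out.

```python
import json
import math
from dataclasses import asdict, dataclass


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Closeness of two vectors by angle: 1.0 = same direction, 0.0 = unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms if norms else 0.0


@dataclass
class AuditEntry:
    # Hypothetical audit-log fields, chosen for illustration.
    passage: str
    source: str
    score: float
    included: bool


def retrieve_and_log(query_vec, passages, threshold):
    """Score every passage against the query and log each one,
    marking whether it cleared the relevance threshold."""
    log = []
    for text, source, vec in passages:
        score = cosine_similarity(query_vec, vec)
        log.append(AuditEntry(text, source, round(score, 3), score >= threshold))
    log.sort(key=lambda e: e.score, reverse=True)  # most relevant first
    return log


# Toy "meaning" vectors standing in for real embedding-model output.
QUERY = [0.9, 0.1, 0.0]  # stands in for the question "overtime"
PASSAGES = [
    ("Overtime must be approved in advance.", "policy_manual.docx", [0.85, 0.0, 0.2]),
    ("I felt pressured to stay past my shift.", "interview_02.pdf", [0.8, 0.3, 0.1]),
    ("The parking lot was repaved in June.", "field_notes.txt", [0.0, 0.1, 0.95]),
]

if __name__ == "__main__":
    audit_log = retrieve_and_log(QUERY, PASSAGES, threshold=0.5)
    print(json.dumps([asdict(e) for e in audit_log], indent=2))
```

The interview passage scores almost as high as the policy passage, even though it never uses the word "overtime". The unrelated field note falls below the threshold. It still appears in the log, marked as excluded.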

Nquiry also uses traditional keyword search alongside meaning-based search. Some evidence — like a specific document number or a person's name — is better found by exact match. Nquiry runs both approaches and combines the results.
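The text does not say how Nquiry combines the two result lists. One widely used technique is reciprocal rank fusion, sketched below in Python as an assumption, not as Nquiry's method. The passage IDs are hypothetical, and `k = 60` is a conventional default.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of passage IDs into one ranking.

    Each list adds 1 / (k + rank) for every passage it contains, so a
    passage ranked highly by either method rises toward the top.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, passage_id in enumerate(ranking, start=1):
            scores[passage_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda pid: scores[pid], reverse=True)


# Hypothetical results for the same question from each method.
KEYWORD_HITS = ["doc-1047", "p3", "p9"]   # exact match, e.g. a document number
SEMANTIC_HITS = ["p3", "p21", "doc-1047"]  # meaning-based matches

if __name__ == "__main__":
    print(reciprocal_rank_fusion([KEYWORD_HITS, SEMANTIC_HITS]))
```

Passage `p3`, which both methods found, ends up first. `doc-1047` is an exact document-number hit that semantic search ranked low, but it still lands second. Fusing ranks instead of raw scores avoids having to calibrate keyword scores and similarity scores, which sit on different scales.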

Same question. Different paradigms.

“Did employees report concerns about working conditions?”

| | Traditional Search | Nquiry |
|---|---|---|
| Process | Search “working conditions” → 3 hits. Search “concerns” → 12 hits. Search “complaints” → 5 hits. Manually read. Hope you picked the right keywords. | System finds 47 passages semantically related to “concerns about working conditions” across all documents. |
| What you find | Only what your keywords match. | “I told my supervisor the schedule was unsustainable” (high relevance). “Several of us talked about leaving” (moderate relevance). Passages you would never have searched for. |
| Confidence | “I searched for the right terms.” | “Here are the 47 passages above the relevance threshold, from 8 sources, with relevance scores for each.” |
| Audit trail | Your search history. | Full record of what the AI considered, included, and excluded. |